The Next Step in HD TV (Long Post)

Today I visited the Open House for NHK labs in Setagaya to get a sneak peek at the new “8K” UHDTV (Ultra High Definition TV) standard, known in Japan as Super Hi-Vision. They had their 145-inch super-LCD screen going, in full 7680 x 4320-pixel glory.

The system is not just 16 times sharper than your latest-model HDTV; aside from having 16 times the pixels, it’s also progressive scan (not interlaced), and it’s got a refresh rate of 120 Hz. In short, it looks great.

Confused by the tech talk? Let me see if I can explain it.

First, let’s begin with some basic display vocabulary: scan, scan lines, interlaced and progressive scan, refresh rate, pixels, resolution, and aspect ratio. Let’s also go back to the earliest standard TV sets as well.

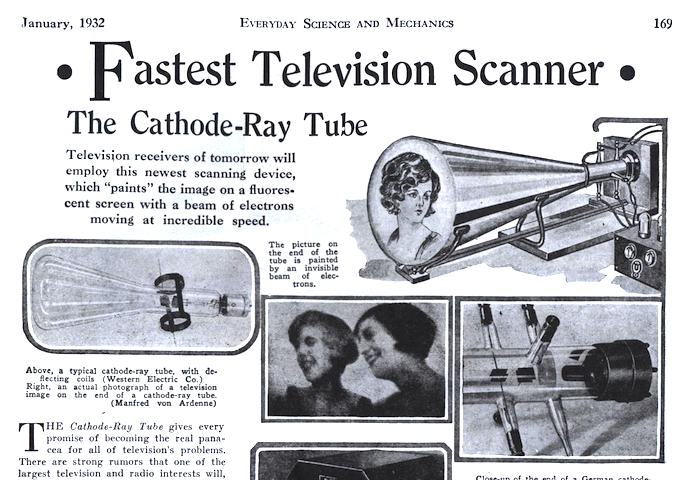

We refer to scanning in a television because of how the century-old (!) TV technology works.

That would be a CRT or Cathode Ray Tube. This was a glass vacuum tube with up to three electron guns (red, green, and blue for color) in the back. These guns would fire electrons at a phosphor (light-emitting) screen, in a rectangular shape called a raster.

If none of that makes any sense to you, then forget it. Just remember that the guns in the back of the tube fire energy at the screen to make it light up. But they do so in a pattern. They start at the top left and sweep left to right, painting a single line of the picture across the screen. When the right end of the line is finished, the guns go back to the left side and start painting the next line. They do this over and over again, through hundreds of lines. All this in a fraction of a second.

This process was called scanning; each line was a scan line. When the guns finished at the lower-right corner of the screen, a single scan was complete. The number of scans per second is measured in hertz (Hz).

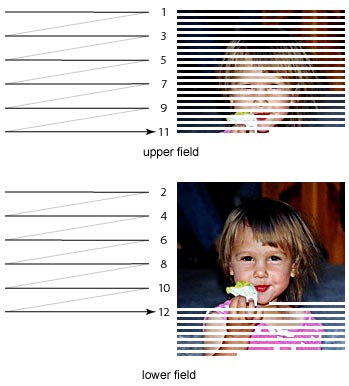

With the technology available in the mid-20th century, scanning each line in order didn’t work well; the picture did not show motion well, and there was flicker. As a result, they came up with a display method called interlaced scan. It fixed these problems, though it also had a disadvantage: it is not as sharp as it could be; small text, for example, often appears fuzzy.

Interlaced scan means that the guns only painted every other line in each scan. That is, on the first scan, they would paint lines 1, 3, 5, 7 and so on; on the following scan, they would fill in the missing even-numbered lines, 2, 4, 6, 8 and so forth. In this way, a single full image took two scans to complete.

The number of scans per second was called the refresh rate. This was set at 60 Hz; because of interlaced scan, this meant that 30 frames per second could be shown.
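To make the odd/even idea concrete, here is a minimal Python sketch. The function names are my own inventions for illustration, and a “frame” here is just a list of line numbers rather than real video data.

```python
def split_into_fields(frame_lines):
    """Split a full frame (a list of scan lines) into two interlaced fields."""
    odd_field = frame_lines[0::2]   # lines 1, 3, 5, ... (counting from 1)
    even_field = frame_lines[1::2]  # lines 2, 4, 6, ...
    return odd_field, even_field


def weave(odd_field, even_field):
    """Rebuild the full frame by alternating lines from the two fields."""
    frame = []
    for i, line in enumerate(odd_field):
        frame.append(line)
        if i < len(even_field):
            frame.append(even_field[i])
    return frame


def full_frames_per_second(scans_per_second, interlaced):
    """Interlaced video needs two scans per full frame; progressive needs one."""
    return scans_per_second / 2 if interlaced else scans_per_second


lines = list(range(1, 9))            # a toy 8-line picture
odd, even = split_into_fields(lines)
print(odd, even)                     # first scan, second scan
print(weave(odd, even) == lines)     # the two scans together give the full image
print(full_frames_per_second(60, interlaced=True))
```

With a 60 Hz refresh rate, the last line works out to the 30 full frames per second mentioned above.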

Interlaced scan was not the only way to show an image; progressive scan paints an entire image, all the scan lines, in one scan. Once the problems with motion and flicker were resolved, progressive scan was used in computer monitors, giving them a much better image.

The number of scan lines–the resolution–also had to be decided. The television most people grew up with originated in the 1940’s and 50’s. In North America, the NTSC (National Television System Committee) settled on a standard of 525 horizontal scan lines for the TV, although only about 480 lines are visible, and the other 45 lines are used for other information, including closed captions.

This picture is equivalent to an image on your computer screen 480 pixels tall, the vertical resolution. The horizontal resolution ranges from 640 to 720 pixels, depending on the type.

The aspect ratio (horizontal-to-vertical ratio) is 4:3, although a 720 x 480 screen would be 3:2.
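If you want to check an aspect ratio yourself, you just reduce width and height by their greatest common divisor. A small sketch (the helper name `aspect_ratio` is mine, and it treats pixels as square):

```python
from math import gcd


def aspect_ratio(width, height):
    """Reduce a pixel resolution to its simplest width:height ratio."""
    divisor = gcd(width, height)
    return width // divisor, height // divisor


for width, height in [(640, 480), (720, 480), (1920, 1080)]:
    w, h = aspect_ratio(width, height)
    print(f"{width} x {height} -> {w}:{h}")
```

This gives 4:3 for 640 x 480, 3:2 for 720 x 480, and 16:9 for 1920 x 1080.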

OK, now that we’re through with all that, what did the old NTSC standard of 480 lines look like? Well, here’s an image with 480 ”lines“ of resolution in the NTSC aspect ratio:

Looks OK, doesn’t it? However, there’s a catch–the image you see above is shown in progressive scan, so it looks sharper than it should. Still, that’s fairly close. This is what we used to think of as a clear, sharp TV image.

However, there’s another hitch: you’re looking at it in a very small space. That image might occupy only as much as 7 inches diagonally. Blow up the same image and paste it on a 40-inch TV, and it won’t look so good.
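A rough way to put a number on this is pixels per inch. The sketch below assumes a 640 x 480 grid of square pixels (my assumption for illustration) and uses the 7-inch and 40-inch diagonals from above; the function name is hypothetical.

```python
from math import hypot


def pixels_per_inch(width_px, height_px, diagonal_inches):
    """Pixel density: pixels along the diagonal divided by the diagonal length."""
    return hypot(width_px, height_px) / diagonal_inches


for diagonal in (7, 40):
    ppi = pixels_per_inch(640, 480, diagonal)
    print(f"640 x 480 at {diagonal} inches: about {ppi:.0f} pixels per inch")
```

The same image goes from roughly 114 pixels per inch down to 20, which is why it falls apart on the bigger screen.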

We found this out at around the turn of the century, when the next generation of TVs, called HDTV (High Definition TV), came out. (Japan calls this ”Hi-Vision.“) These TVs have a vertical line count of 1080. Since we use LCD screens, and they use pixels, we refer to the overall resolution as 1920 x 1080.

So before, we had 480i (480 lines interlaced); with HDTV, we got 1080i. That’s more than double the lines, and (because the screen has a wider aspect ratio) almost 7 times more information.

Now, I can’t show you an HDTV image on this screen, as it likely would be bigger than your display area (here’s such an image you can view separately). So instead let’s scale things down to about 1/3rd the height, or about 1/9th the area. Of the two images below, the one on top is the same 480i NTSC image scaled down, and below it, an HDTV (1080i) version of the same image. Were the two TVs to have the same ”pixel“ size, this is how they would compare. Note also the difference in aspect ratios:

As you can see, you’re getting a lot more image with HDTV, even if your newer TV doesn’t look that much bigger.

Now, look what an old 480 NTSC image would look like on a newer HDTV screen, with the 1080 image next to it for comparison:

The old NTSC image looks kind of fuzzy in comparison, doesn’t it? Now, keep in mind that you are looking at it on a progressive-scan screen at a small screen size! On a real HDTV, it would look even worse. That’s what you see on your new HDTV when they broadcast an old-timey teevee show!

So you can see that HDTV was a big improvement. Even more so was Blu-ray; instead of showing images in 1080i, Blu-ray shows them in 1080p. That’s the best quality you’ll see on your current TV. It also might even be better quality than some films shot on 35mm film in the old days, which is why Blu-ray doesn’t always seem to give you the ”best“ quality when you’re watching films from a long time ago.

Interestingly, George Lucas used a 1080p digital camera to shoot Star Wars: Attack of the Clones. While 35mm film can be better quality than 1080p, under some circumstances they are close enough that most people would not notice the difference, especially with the processing that films go through.

So, is 1080 the end? Not by a long shot.

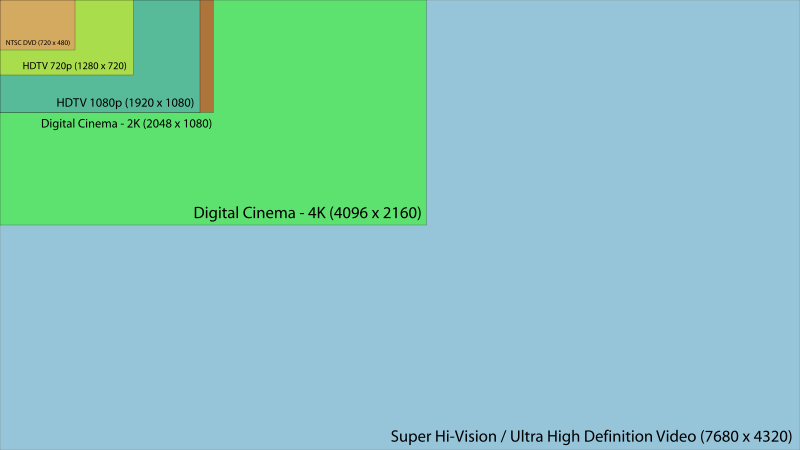

In fact, we’re now heading into perhaps as much as two generations beyond HDTV. They are referred to as 4K and 8K, or QFHD and UHD TV.

The 4K, or QFHD (Quad Full High Definition) is 3840 x 2160, which is exactly double the vertical and horizontal resolution of HDTV (also called ”FHD,“ or ”Full High Definition“), which gives it 4 times the pixels, or overall resolution. Thus the ”Quad“ label. Current HDTV has about 2,000,000 pixels (2 megapixels); 4K has more than 8,000,000, or 8 megapixels.

The ”4K“ label, by the way, does not come from the ”quad“ label; 4K comes from the rough number of horizontal pixels. 3840 is close to 4000, therefore we get ”4K.“

4K is just now becoming available; you can actually buy 4K Blu-ray players and 4K TV sets. HDMI cables are now capable of transmitting 4K video. And 4K is actually closer to a cinema standard–a movie shot in 4K video (as many are now) will look just as good as any shot on film.

However, there’s a catch: 4K TV sets (projectors, really) still cost at least $10,000, even if the 4K-ready Blu-ray players can be had for much cheaper. Oh yeah–there’s nothing to watch in 4K anyway. However, 4K might be good for HDTV-quality 3-D viewing, although that’s a limited use for expensive equipment.

Not that 4K won’t become cheaper and more available in the next few years. The problem is, by the time it catches on, it’ll already be obsolete.

You see, NHK here in Japan is working on 8K: a full 7680 x 4320 pixels, more than 33,000,000, or 33 megapixels. That’s more than 100 times the number of pixels on a pre-HD television set! Not only that, it’s progressive scan. And it scans 120 times a second (120 Hz), so you get sharpness even with motion that would blur on current TVs.
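If you’d like to check the arithmetic, here is a quick sketch that multiplies out each resolution and compares it with HDTV and with the old NTSC picture. I’m assuming 640 x 480 for the NTSC figure (the low end of the range given earlier); the dictionary and function names are just for illustration.

```python
FORMATS = {
    "NTSC (480 lines)": (640, 480),      # assumed 640 wide for this comparison
    "HDTV (1080)": (1920, 1080),
    "4K / QFHD": (3840, 2160),
    "8K / Super Hi-Vision": (7680, 4320),
}


def pixel_count(width, height):
    """Total number of pixels in one full image."""
    return width * height


ntsc = pixel_count(*FORMATS["NTSC (480 lines)"])
hdtv = pixel_count(*FORMATS["HDTV (1080)"])

for name, (width, height) in FORMATS.items():
    n = pixel_count(width, height)
    print(f"{name:22} {width} x {height} = {n:>10,} pixels, "
          f"{n / hdtv:.2f}x HDTV, {n / ntsc:.2f}x NTSC")
```

8K comes out at 33,177,600 pixels: 16 times HDTV and 108 times the 640 x 480 picture.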

Still not impressed? Let me show you a scale showing all the different resolutions:

See that tiny orange scrap at top left? That’s your old NTSC TV set, or rather the higher-quality version of it, at top DVD quality. The screen two levels down, the darker green one, marked ”HDTV 1080p“? That’s your current flat-screen set. The light green square is 4K, what is coming out right now. The largest light-blue square is Super Hi-Vision.

They say that it will be ready for broadcast from NHK’s satellites from 2020 (give or take a few years). By 2025 they expect to broadcast that over the Internet.

Not only that, the sound will improve. Today’s ”home theater“ systems include 5.1 surround sound, meaning there are five speakers surrounding the viewer, and a subwoofer for bass.

Super Hi-Vision has a 22.2 sound system: yep, 24 speakers in all. There are 9 at the top of the room, 10 around the middle, and 3 normal speakers in front, accompanied by two subwoofers.

And still, we’re not finished. After all, you can’t just go and increase resolution by 16 times and expect it’ll still fit on the same media, right?

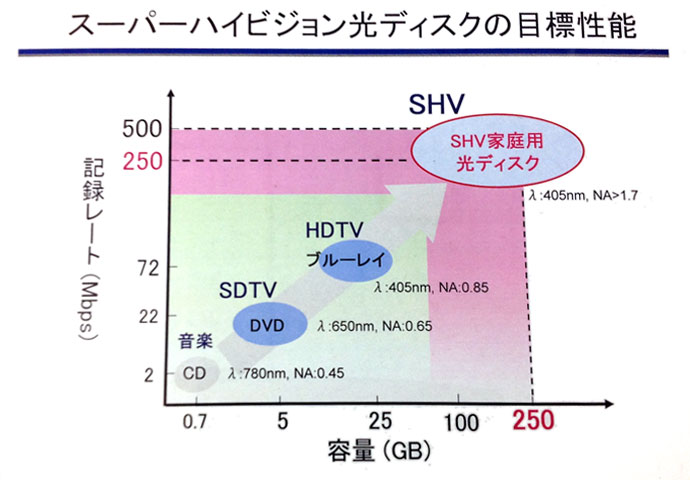

When DVDs were too small for recorded HDTV, we got Blu-ray, going from 4.7 GB for DVDs to 25 to 100 GB for Blu-rays. Even with compression, however, Super Hi-Vision will require media that’s 250 GB in size, at least.

Now, Blu-ray might get there: at 25 GB per layer, it is already at 100 GB thanks to 4-layer discs, and 10-layer discs are not too far off. However, it’s not just the capacity: it’s the access speed. If the media can’t shoot out the video fast enough, it won’t work. And the guy I spoke to at NHK (several of them spoke pretty good English, not surprisingly) said that even with upgrades, Blu-ray just won’t cut it.
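To get a feel for why speed matters, here is a back-of-the-envelope sketch. The 24 bits per pixel and the 2-hour running time are my own assumptions, not NHK figures, and I’m counting a gigabyte as a billion bytes.

```python
def raw_bits_per_second(width, height, frames_per_second, bits_per_pixel):
    """Uncompressed video data rate in bits per second."""
    return width * height * frames_per_second * bits_per_pixel


def required_read_rate_mbps(disc_gigabytes, running_time_minutes):
    """Average read speed (megabits per second) needed to play a full disc
    in the given running time, with 1 GB taken as 10**9 bytes."""
    total_bits = disc_gigabytes * 10**9 * 8
    return total_bits / (running_time_minutes * 60) / 10**6


# Assumption: 24 bits per pixel (8 bits each for red, green, and blue).
raw = raw_bits_per_second(7680, 4320, 120, 24)
print(f"Uncompressed 8K at 120 Hz: about {raw / 10**9:.1f} gigabits per second")

# Assumption: a 2-hour program filling the 250 GB mentioned above.
needed = required_read_rate_mbps(250, 120)
print(f"Read rate to play 250 GB in 2 hours: about {needed:.0f} megabits per second")
```

Under those assumptions the raw signal is on the order of 95 gigabits per second, and the disc has to deliver something like 278 megabits per second on average, so both heavy compression and a fast drive are needed.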

So NHK is looking into alternatives–like this:

Note the NHK disc is floppy, not unlike the opaque black mylar film used in the original floppies decades back. But this disc (which will probably be firmer when released) holds 100 GB per layer due to a lens process with blue lasers which halves the width of the beam, thus producing 4 times the capacity. A 4-layer SHV disc would hold 400 GB, more than enough for an SHV video.
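The “half the width, four times the capacity” part is just area scaling: a spot half as wide covers a quarter of the area, so four times as many fit. Here is a small sketch, assuming the starting point is Blu-ray’s 25 GB per layer (that baseline is my reading of the numbers, not something NHK told me), with a made-up function name.

```python
def scaled_capacity_gb(base_gb_per_layer, beam_width_factor, layers):
    """Capacity after shrinking the laser spot.

    beam_width_factor is the new spot width relative to the old one
    (0.5 means half as wide). Areal density goes up by 1 / factor squared.
    """
    density_gain = 1 / beam_width_factor ** 2
    return base_gb_per_layer * density_gain * layers


# Assumption: 25 GB per layer as the baseline.
print(scaled_capacity_gb(25, 0.5, layers=1))  # one layer
print(scaled_capacity_gb(25, 0.5, layers=4))  # four layers
```

That gives 100 GB for one layer and 400 GB for four, matching the figures above.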

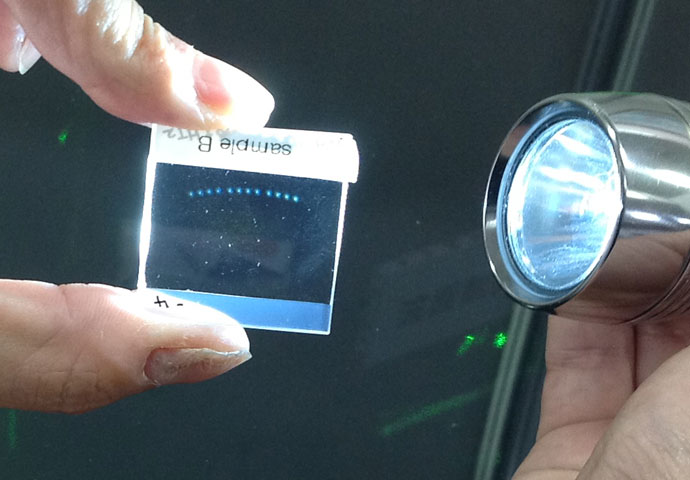

But that’s not all. They’re also working on holographic media:

See those tiny little dots? Each one is 10 MB of data. Or was it 100 MB? Frankly, I forget–I didn’t write it down. Whatever the case, the guy said that one of those inch-square plastic (glass?) chips would hold as much as a terabyte of data. He also said that it wasn’t reliable enough for data storage yet–but he did say that he expected it to be sold on the market within three years.

He even had a cool laser setup you could look at:

That disc in the middle is not the media–the square chip on it is.

Now, when I saw this, something immediately came to mind. Data storage media, in the form of thick plastic cards… where had I seen those before…?

Of course, they seemed to have far less capacity back then.

Alas, the US’s cable system (essentially Comcast, thanks to our wondrous Free Market®) can’t handle 1080p, let alone quad density.

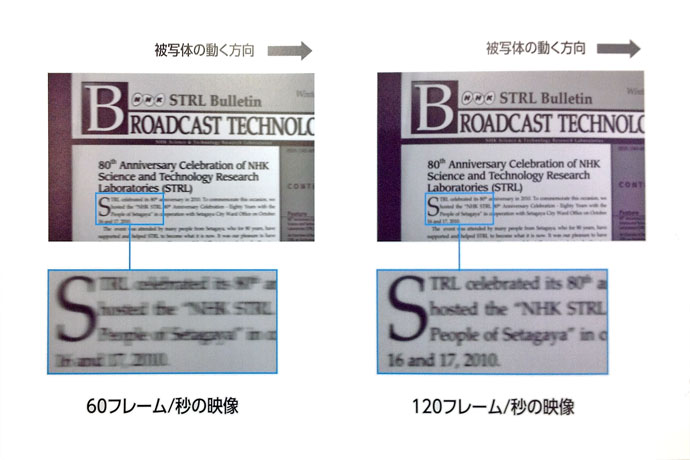

“And it scans 120 times a second (120 Hz), so you get sharpness even with motion that would blur on current TVs.”

From what I know, “blurring” isn’t that big a deal; things blur even at 120 Hz, and blur looks natural anyway.

Higher fps improves the consistency of the image when the camera is rotating and blur is excessive.

But here:

http://www.tvbeurope.com/newslettersportsbroadcast-content/full/super-hi-vision-at-120fps-for-olympics

there’s an alleged camera expert who says 300fps is the minimum acceptable for some reason so what do I know.

Negroponte’s Being Digital has an interesting discussion of NHK’s mistake going with analog signaling for their original MUSE system.

Also, this book:

http://www.amazon.com/Defining-Vision-Broadcasters-Government-Revolution/dp/0156005972

looks interesting. AFAIK, Japan had to discard their 1980’s technology when HDTV came out and start over.

I remember seeing the HD demonstrations in the Shinjuku post office. Which makes sense, since at the time the responsible government ministry was the Ministry of Posts and Telecommunications.

My friend has a projection system that upconverts 1080p to quad-density via pixel-shifting:

http://newsroom.jvc.com/2011/09/new-jvc-home-theater-projectors-display-images-with-4k-precision/

$8000 but worth every penny. He’s got the 12′ movie screen to go with it, too.

The stupid thing is that the distribution model needs more innovation than the displays now. It’s obvious we need a la carte cable; instead of 250 channels of pre-scheduled crap, homes just need 1-4 channels of on-demand provisioning.

No real technological barrier, but one formidable financial one. Apple sponsored the digital audio download revolution, but it’s not entirely clear if they can win this next battle. Maybe they should buy Sony, and/or NHK LOL.

Actually, I think the greater excess is the pixel size; there’s a limit to how much detail we can see. For a TV around 50 or 60 inches at comfortable viewing distance, I have the feeling that 33 megapixels is already beyond what the human eye can discern. However, it may work better when used for 3D, where you lose resolution in exchange for the 3D effect.